To give QNX Neutrino a great degree of flexibility, to minimize the runtime memory requirements of the final system, and to cope with the wide variety of devices that may be found in a custom embedded system, the OS allows user-written processes to act as resource managers that can be started and stopped dynamically.

Resource managers are typically responsible for presenting an interface to various types of devices. This may involve managing actual hardware devices (like serial ports, parallel ports, network cards, and disk drives) or virtual devices (like /dev/null, a network filesystem, and pseudo-ttys).

In other operating systems, this functionality is traditionally associated with device drivers. But unlike device drivers, resource managers don't require any special arrangements with the kernel. In fact, a resource manager looks just like any other user-level program.

Since QNX Neutrino is a distributed, microkernel OS with virtually all nonkernel functionality provided by user-installable programs, a clean and well-defined interface is required between client programs and resource managers. All resource manager functions are documented; there's no "magic" or private interface between the kernel and a resource manager.

In fact, a resource manager is basically a user-level server program that accepts messages from other programs and, optionally, communicates with hardware. Again, the power and flexibility of our native IPC services allow the resource manager to be decoupled from the OS.

The binding between the resource manager and the client programs that use the associated resource is done through a flexible mechanism called pathname space mapping.

In pathname space mapping, an association is made between a pathname and a resource manager. The resource manager sets up this pathname space mapping by informing the process manager that it is the one responsible for handling requests at (or below, in the case of filesystems) a certain mountpoint. This allows the process manager to associate services (i.e. functions provided by resource managers) with pathnames.

For example, a serial port may be managed by a resource manager called devc-ser*, but the actual resource may be called /dev/ser1 in the pathname space. Therefore, when a program requests serial port services, it typically does so by opening a serial port, in this case /dev/ser1.
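Conceptually, the process manager keeps a table of registered pathnames and hands each request to the manager whose entry matches. Here's a minimal sketch of such a lookup. The table, the manager name fs-example, and `resolve_path()` are hypothetical, and the longest-matching-prefix rule is an assumption of the sketch, not a description of the process manager's internals.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical entry: one pathname registered by one resource manager. */
struct prefix_entry {
    const char *prefix;   /* registered pathname or mountpoint */
    const char *manager;  /* the resource manager that registered it */
};

static const struct prefix_entry prefix_table[] = {
    { "/dev/ser1", "devc-ser*" },
    { "/dev/ser2", "devc-ser*" },
    { "/mount",    "fs-example" },   /* hypothetical filesystem manager */
};

/* Returns the manager whose registered prefix is the longest match for
 * `path`, or NULL if none matches.  On success, *rest points at the part
 * of the path that the manager itself must interpret ("" for an exact
 * match). */
static const char *resolve_path(const char *path, const char **rest)
{
    const struct prefix_entry *best = NULL;
    size_t best_len = 0;

    for (size_t i = 0; i < sizeof prefix_table / sizeof prefix_table[0]; i++) {
        size_t len = strlen(prefix_table[i].prefix);

        /* Match whole path components only. */
        if (strncmp(path, prefix_table[i].prefix, len) == 0 &&
            (path[len] == '\0' || path[len] == '/') && len > best_len) {
            best = &prefix_table[i];
            best_len = len;
        }
    }
    if (best == NULL)
        return NULL;
    *rest = path + best_len;
    if (**rest == '/')
        (*rest)++;                  /* drop the separator after the prefix */
    return best->manager;
}
```

Requiring the match to end at a `/` or at the end of the string keeps /dev/ser1 from claiming /dev/ser10. The leftover part (home/thomasf for /mount/home/thomasf) is what a filesystem resource manager would interpret itself.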

Here are a few reasons why you may want to write a resource manager:

The API for communicating with the resource manager is, for the most part, POSIX. All C programmers are familiar with the open(), read(), and write() functions. Training costs are minimized, and so is the need to document the interface to your server.

If you have many server processes, writing each server as a resource manager keeps the number of different interfaces that clients need to use to a minimum.

For example, suppose you have a team of programmers building your overall application, and each programmer is writing one or more servers for that application. These programmers may work directly for your company, or they may belong to partner companies who are developing add-on hardware for your modular platform.

If the servers are resource managers, then the interface to all of those servers is the POSIX functions: open(), read(), write(), and whatever else makes sense. For control-type messages that don't fit into a read/write model, there's devctl() (although devctl() isn't POSIX).

Since the API for communicating with a resource manager is the POSIX set of functions, and since standard POSIX utilities use this API, the utilities can be used for communicating with the resource managers.

For instance, the tiny TCP/IP protocol module contains resource-manager code that registers the name /proc/ipstats. If you open this name and read from it, the resource manager code responds with a body of text that describes the statistics for IP.

The cat utility takes the name of a file and opens the file, reads from it, and displays whatever it reads to standard output (typically the screen). As a result, you can type:

```sh
cat /proc/ipstats
```

The resource manager code in the TCP/IP protocol module responds with text such as:

```
Ttcpip Sep 5 2000 08:56:16
verbosity level 0
ip checksum errors: 0
udp checksum errors: 0
tcp checksum errors: 0
packets sent: 82
packets received: 82
lo0 : addr 127.0.0.1 netmask 255.0.0.0 up
DST: 127.0.0.0 NETMASK: 255.0.0.0 GATEWAY: lo0
TCP 127.0.0.1.1227 > 127.0.0.1.6000 ESTABLISHED snd 0 rcv 0
TCP 127.0.0.1.6000 > 127.0.0.1.1227 ESTABLISHED snd 0 rcv 0
TCP 0.0.0.0.6000 LISTEN
```

You could also use command-line utilities for a robot-arm driver. The driver could register the name /dev/robot/arm/angle and interpret any writes to this device as the angle to set the robot arm to. To test the driver from the command line, you'd type:

```sh
echo 87 >/dev/robot/arm/angle
```

The echo utility opens /dev/robot/arm/angle and writes the string ("87") to it. The driver handles the write by setting the robot arm to 87 degrees. Note that this was accomplished without writing a special tester program.
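On the driver's side, handling that write comes down to turning the bytes of an io_write message into a number. Here's a minimal sketch, assuming a hypothetical handler, `arm_handle_write()`, that receives the raw data from the write and accepts angles from 0 to 180 degrees. The range, the names, and the negative-errno return convention are illustrative only.

```c
#include <ctype.h>
#include <errno.h>
#include <stddef.h>

/* Hypothetical device state: the angle the arm was last set to. */
static int arm_angle;

/* Parses the data from one write (e.g. "87\n" from echo) and sets the arm.
 * Returns the number of bytes consumed, or -EINVAL if the data isn't a
 * decimal angle in the assumed 0..180 range. */
static long arm_handle_write(const char *buf, size_t nbytes)
{
    size_t i = 0;
    int angle = 0;

    if (nbytes == 0 || !isdigit((unsigned char)buf[0]))
        return -EINVAL;
    while (i < nbytes && isdigit((unsigned char)buf[i])) {
        angle = angle * 10 + (buf[i] - '0');
        if (angle > 180)
            return -EINVAL;         /* also prevents overflow on long input */
        i++;
    }
    if (i < nbytes && buf[i] == '\n')
        i++;                        /* accept the newline that echo appends */
    if (i != nbytes)
        return -EINVAL;             /* trailing garbage */
    arm_angle = angle;              /* a real driver would program the hardware here */
    return (long)nbytes;
}
```

Rejecting the whole write on bad input leaves the arm where it was, which is usually what you want when someone mistypes a command.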

Another example would be names such as /dev/robot/registers/r1, r2, and so on. Reading from these names returns the contents of the corresponding registers; writing to these names sets the corresponding registers to the given values.
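The register names can be served the same way. This sketch assumes a hypothetical bank of four 32-bit registers and invented helpers (`reg_index()`, `reg_read()`, `reg_write()`). Reads return the value as decimal text, the way cat would display it, and writes parse decimal text.

```c
#include <inttypes.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

#define NUM_REGS 4

static uint32_t regs[NUM_REGS];   /* stand-in for the hardware registers */

/* Maps a name such as "r1" to an array index; returns -1 if unknown. */
static int reg_index(const char *name)
{
    char *end;
    long n;

    if (name[0] != 'r' || name[1] < '0' || name[1] > '9')
        return -1;
    n = strtol(name + 1, &end, 10);
    if (*end != '\0' || n < 1 || n > NUM_REGS)
        return -1;
    return (int)(n - 1);          /* r1 is regs[0] */
}

/* Formats a register's value as text, as a read would return it.
 * Returns the length of the text, like snprintf(). */
static int reg_read(int idx, char *buf, size_t size)
{
    return snprintf(buf, size, "%" PRIu32 "\n", regs[idx]);
}

/* Parses decimal text (an optional trailing newline is allowed) and stores
 * it in the register.  Returns 0 on success or -1 on bad input. */
static int reg_write(int idx, const char *text)
{
    char *end;
    unsigned long v;

    if (text[0] < '0' || text[0] > '9')
        return -1;
    v = strtoul(text, &end, 10);
    if ((*end != '\0' && *end != '\n') || v > UINT32_MAX)
        return -1;
    regs[idx] = (uint32_t)v;
    return 0;
}
```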

Even if all of your other IPC is done via some non-POSIX API, it's still worth having one thread act as a resource manager that responds to reads and writes, so that you can do things like those shown above.

Depending on how much work you want to do yourself in order to present a proper POSIX filesystem to the client, you can break resource managers into two types:

Device resource managers create only single-file entries in the filesystem, each of which is registered with the process manager. Each name usually represents a single device. These resource managers typically rely on the resource-manager library to do most of the work in presenting a POSIX device to the user.

For example, a serial port driver registers names such as /dev/ser1 and /dev/ser2. When the user does ls -l /dev, the library does the necessary handling to respond to the resulting _IO_STAT messages with the proper information. The person who writes the serial port driver can concentrate instead on the details of managing the serial port hardware.

Filesystem resource managers register a mountpoint with the process manager. A mountpoint is the portion of the path that's registered with the process manager. The remaining parts of the path are managed by the filesystem resource manager. For example, when a filesystem resource manager attaches a mountpoint at /mount, and the path /mount/home/thomasf is examined, the /mount portion identifies the mountpoint that the process manager resolves, and the remaining portion, home/thomasf, is left for the filesystem resource manager to interpret.

Here are some examples of using filesystem resource managers:

Once a resource manager has established its pathname prefix, it will receive messages whenever any client program tries to do an open(), read(), write(), etc. on that pathname. For example, after devc-ser* has taken over the pathname /dev/ser1, and a client program executes:

```c
fd = open ("/dev/ser1", O_RDONLY);
```

the client's C library will construct an io_open message, which it then sends to the devc-ser* resource manager via IPC.

Some time later, when the client program executes:

```c
read (fd, buf, BUFSIZ);
```

the client's C library constructs an io_read message, which is then sent to the resource manager.
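The two messages differ mainly in what they have to carry. The structures below are a simplified, hypothetical illustration (`struct sketch_open_msg`, `struct sketch_read_msg`, and the type codes are invented for this sketch); the real QNX message layouts are defined by the system headers and differ from these. The point is that the connect message names a path, while the I/O message carries only what the request needs.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical type codes for this sketch only. */
enum sketch_msg_type { SKETCH_IO_OPEN = 1, SKETCH_IO_READ = 2 };

/* A connect message names a path, so it carries the pathname. */
struct sketch_open_msg {
    uint16_t type;        /* SKETCH_IO_OPEN */
    int32_t  oflag;       /* e.g. O_RDONLY */
    char     path[64];    /* the pathname being opened */
};

/* An I/O message refers to an already-open file, so it carries only the
 * request size; the resource manager finds the file (and its current
 * position) from the connection the message arrives on. */
struct sketch_read_msg {
    uint16_t type;        /* SKETCH_IO_READ */
    uint32_t nbytes;      /* how much the client wants to read */
};

/* Builds an open request; returns -1 if the path doesn't fit. */
static int build_open(struct sketch_open_msg *m, const char *path, int oflag)
{
    size_t len = strlen(path);

    if (len >= sizeof m->path)
        return -1;
    m->type = SKETCH_IO_OPEN;
    m->oflag = oflag;
    memcpy(m->path, path, len + 1);
    return 0;
}

static void build_read(struct sketch_read_msg *m, uint32_t nbytes)
{
    m->type = SKETCH_IO_READ;
    m->nbytes = nbytes;
}
```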

A key point is that all communications between the client program and the resource manager are done through native IPC messaging. This allows for a number of unique features:

All QNX Neutrino device drivers and filesystems are implemented as resource managers. This means that everything that a "native" QNX Neutrino device driver or filesystem can do, a user-written resource manager can do as well.

Consider FTP filesystems, for instance. Here a resource manager would take over a portion of the pathname space (e.g. /ftp) and allow users to cd into FTP sites to get files. For example, cd /ftp/rtfm.mit.edu/pub would connect to the FTP site rtfm.mit.edu and change directory to /pub. After that point, the user could open, edit, or copy files.

Application-specific filesystems would be another example of a user-written resource manager. Given an application that makes extensive use of disk-based files, a custom-tailored filesystem can be written that works with that application and delivers superior performance.

The possibilities for custom resource managers are limited only by the application developer's imagination.

Here is the heart of a resource manager:

```
initialize the dispatch interface
register the pathname with the process manager

DO forever
    receive a message
    SWITCH on the type of message
        CASE io_open:
            perform io_open processing
        ENDCASE
        CASE io_read:
            perform io_read processing
        ENDCASE
        CASE io_write:
            perform io_write processing
        ENDCASE
        .   // etc. handle all other messages
        .   // that may occur, performing
        .   // processing as appropriate
    ENDSWITCH
ENDDO
```
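The same loop can be simulated in plain C. Nothing here is the QNX API: `receive_message()` is a hypothetical stand-in for a blocking receive that replays a canned queue so the sketch runs anywhere, `MSG_QUIT` exists only so the simulation can end, and the handlers merely count calls. In a real resource manager, the library's dispatch functions run this loop for you.

```c
#include <stddef.h>

/* Hypothetical message types for this sketch. */
enum msg_type { MSG_OPEN, MSG_READ, MSG_WRITE, MSG_CLOSE, MSG_QUIT };

struct message { enum msg_type type; };

/* Canned input standing in for messages sent by clients. */
static const struct message queue[] = {
    { MSG_OPEN }, { MSG_READ }, { MSG_WRITE }, { MSG_READ }, { MSG_CLOSE }, { MSG_QUIT }
};
static size_t next_msg;

static int n_open, n_read, n_write, n_other;

/* Stand-in for a blocking receive: returns the next queued message. */
static struct message receive_message(void)
{
    return queue[next_msg++];
}

static void resmgr_loop(void)
{
    /* (initializing the dispatch interface and registering the
     *  pathname are omitted from this simulation) */
    for (;;) {
        struct message msg = receive_message();

        switch (msg.type) {
        case MSG_OPEN:  n_open++;  break;   /* perform io_open processing  */
        case MSG_READ:  n_read++;  break;   /* perform io_read processing  */
        case MSG_WRITE: n_write++; break;   /* perform io_write processing */
        case MSG_QUIT:  return;             /* ends the simulation only    */
        default:        n_other++; break;   /* all other messages          */
        }
    }
}
```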

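The architecture contains three parts: initializing the dispatch interface so that clients can send messages to the resource manager, registering the pathname (or pathnames) that the resource manager is responsible for with the process manager, and the loop that receives and processes messages.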

This message-processing structure (the switch/case, above) is required for each and every resource manager. However, we provide a set of convenient library functions to handle this functionality (and other key functionality as well).

Architecturally, there are two categories of messages that a resource manager will receive:

A connect message is issued by the client to perform an operation based on a pathname (e.g. an io_open message). This may involve performing operations such as permission checks (does the client have the correct permission to open this device?) and setting up a context for that request.

An I/O message relies on this context (created between the client and the resource manager) to perform subsequent operations (e.g. io_read).

There are good reasons for this design. It would be inefficient to pass the full pathname for each and every read() request, for example. The io_open handler can also perform tasks that we want done only once (e.g. permission checks), rather than with each I/O message. Also, when the read() has read 4096 bytes from a disk file, there may be another 20 megabytes still waiting to be read. Therefore, the read() function would need to have some context information telling it the position within the file it's reading from.
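Here's a minimal sketch of that per-open context, assuming a hypothetical in-memory device whose contents are a fixed string. Each open gets its own `struct open_context` holding the offset, and the read handler uses and advances that offset instead of receiving a position from the client. The names are illustrative.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical device contents. */
static const char device_data[] = "hello from the device\n";

/* Per-open context: set up once by the open handler, used by every read. */
struct open_context {
    size_t offset;            /* current position, like the lseek() offset */
};

static void handle_open(struct open_context *ctx)
{
    ctx->offset = 0;          /* one-time work such as permission checks goes here */
}

/* Copies up to nbytes from the current offset and advances it.
 * Returns the number of bytes copied (0 at end of file). */
static size_t handle_read(struct open_context *ctx, char *buf, size_t nbytes)
{
    size_t size = sizeof device_data - 1;        /* exclude the terminating NUL */
    size_t avail = ctx->offset < size ? size - ctx->offset : 0;
    size_t n = nbytes < avail ? nbytes : avail;

    memcpy(buf, device_data + ctx->offset, n);
    ctx->offset += n;
    return n;
}
```

Two clients that open the same device get independent contexts, so each one reads from its own position.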

In a custom embedded system, part of the design effort may be spent writing a resource manager, because there may not be an off-the-shelf driver available for the custom hardware component in the system.

Our resource manager shared library makes this task relatively simple.

If there are functions that the resource manager doesn't want to handle for some reason (e.g. a digital-to-analog converter doesn't support a function such as lseek(), or the software doesn't require it), the shared library will conveniently supply default actions.

There are two levels of default actions:

For more information on default actions, see the section on "Second-level default message handling" in this chapter.

Another convenient service that the resource manager shared library provides is the automatic handling of dup() messages.

Suppose that the client program executed code that eventually ended up performing:

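```c
fd = open ("/dev/device", O_RDONLY);   /* io_open  */
/* ... */
fd2 = dup (fd);                        /* io_dup   */
/* ... */
fd3 = dup (fd);                        /* io_dup   */
/* ... */
close (fd3);                           /* io_close */
/* ... */
close (fd2);                           /* io_close */
/* ... */
close (fd);                            /* io_close */
```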

The client would generate an io_open message for the first open(), and then two io_dup messages for the two dup() calls. Then, when the client executed the close() calls, three io_close messages would be generated.

Since the dup() function generates duplicates of a file descriptor that share the original's context, new context information should not be allocated for each one. Likewise, because no new context has been allocated for each dup(), the io_close messages must not each release the context's memory! (If they did, the first close would wipe out the context that the remaining descriptors still use.)

The resource manager shared library provides default handlers that keep track of the open(), dup(), and close() messages and perform work only for the last close (i.e. the third io_close message in the example above).
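The bookkeeping can be pictured as a reference count on the shared context. The sketch below is a hypothetical model (`struct shared_context`, `on_open()`, `on_dup()`, and `on_close()` are invented names), not the library's implementation: the open allocates the context, each dup only increments the count, and only the close that drops the count to zero frees it.

```c
#include <stdlib.h>

struct shared_context {
    size_t offset;            /* state shared by all duplicated descriptors */
    int    refs;              /* number of descriptors using this context */
};

static int contexts_freed;    /* lets the example observe the final release */

/* io_open: allocate a new context with one reference. */
static struct shared_context *on_open(void)
{
    struct shared_context *ctx = calloc(1, sizeof *ctx);

    if (ctx != NULL)
        ctx->refs = 1;
    return ctx;
}

/* io_dup: reuse the existing context; no new allocation. */
static void on_dup(struct shared_context *ctx)
{
    ctx->refs++;
}

/* io_close: release the context only when the last descriptor closes.
 * Returns 1 if the context was freed, 0 otherwise. */
static int on_close(struct shared_context *ctx)
{
    if (--ctx->refs > 0)
        return 0;
    free(ctx);
    contexts_freed++;
    return 1;
}
```

Replaying the client sequence above against this model gives one allocation, two increments, and a single free on the third close.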

One of the salient features of QNX Neutrino is the ability to use threads. By using multiple threads, a resource manager can be structured so that several threads are waiting for messages and then simultaneously handling them.

This thread management is another convenient function provided by the resource manager shared library. Besides keeping track of both the number of threads created and the number of threads waiting, the library also takes care of maintaining the optimal number of threads.
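One way to picture "the optimal number of threads" is a policy that keeps the number of waiting threads between a low and a high watermark, with a hard cap on the total. The sketch below illustrates that idea only; `struct pool_limits`, its fields, and `pool_adjust()` are hypothetical and are not a description of the library's exact algorithm.

```c
/* Hypothetical pool limits. */
struct pool_limits {
    int lo_water;   /* create threads when fewer than this many are waiting */
    int increment;  /* how many threads to create at a time */
    int hi_water;   /* let threads exit when more than this many are waiting */
    int maximum;    /* never exceed this many threads in total */
};

/* Given the current counts, returns how many threads to create (> 0),
 * how many should exit (< 0), or 0 to leave the pool alone. */
static int pool_adjust(const struct pool_limits *lim, int total, int waiting)
{
    if (waiting < lim->lo_water) {
        int room = lim->maximum - total;
        int want = lim->increment;

        if (room <= 0)
            return 0;                   /* already at the cap */
        return want < room ? want : room;
    }
    if (waiting > lim->hi_water)
        return -(waiting - lim->hi_water);
    return 0;
}
```

Growing in batches avoids creating one thread per message during a burst, and the high watermark keeps idle threads from piling up afterwards.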

The OS provides a set of dispatch_* functions that:

For more information, see the chapter on Writing a Resource Manager in the Programmer's Guide.

In order to conserve network bandwidth and to provide support for atomic operations, the OS supports combine messages. A combine message is constructed by the client's C library and consists of a number of I/O and/or connect messages packaged together into one.

For example, the function readblock() allows a thread to atomically perform an lseek() and read() operation. This is done in the client library by combining the io_lseek and io_read messages into one. When the resource manager shared library receives the message, it will process both the io_lseek and io_read messages, effectively making that readblock() function behave atomically.
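Here's a sketch of why handling both parts together makes the pair atomic. The hypothetical `struct combine_msg` carries an lseek part and a read part, and `handle_combine()` applies both to the same context in one call, so no other request on that context is handled between the seek and the read. A single handling thread is assumed; with several threads, the shared state would also need locking. Seeking past the end is clamped to the end of the file to keep the sketch short.

```c
#include <stddef.h>
#include <string.h>

static const char file_data[] = "0123456789abcdef";   /* hypothetical file contents */

struct file_context { size_t offset; };

/* Hypothetical combine message: an lseek component followed by a read component. */
struct combine_msg {
    size_t seek_to;   /* io_lseek part: absolute offset (like SEEK_SET) */
    size_t nbytes;    /* io_read part: bytes requested */
};

/* Processes both components back to back against the same context.
 * Returns the number of bytes placed in buf. */
static size_t handle_combine(struct file_context *ctx,
                             const struct combine_msg *msg, char *buf)
{
    size_t size = sizeof file_data - 1;
    size_t avail, n;

    /* lseek part (clamped to end of file for simplicity) */
    ctx->offset = msg->seek_to < size ? msg->seek_to : size;

    /* read part, starting exactly where the seek left the offset */
    avail = size - ctx->offset;
    n = msg->nbytes < avail ? msg->nbytes : avail;
    memcpy(buf, file_data + ctx->offset, n);
    ctx->offset += n;
    return n;
}
```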

Combine messages are also useful for the stat() function. A stat() call can be implemented in the client's library as an open(), fstat(), and close(). Instead of generating three separate messages (one for each of the component functions), the library puts them together into one contiguous combine message. This boosts performance, especially over a networked connection, and also simplifies the resource manager, which doesn't need a connect function to handle stat().

The resource manager shared library takes care of the issues associated with breaking out the individual components of the combine message and passing them to the various handler functions supplied. Again, this minimizes the effort associated with writing a resource manager.

Since a large number of the messages received by a resource manager deal with a common set of attributes, the OS provides another level of default handling. This second level, called the iofunc_*() shared library, allows a resource manager to handle functions like stat(), chmod(), chown(), lseek(), etc. automatically, without the programmer having to write additional code. As an added benefit, these iofunc_*() default handlers implement the POSIX semantics for the messages, again offloading work from the programmer.
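As an illustration of what "POSIX semantics" means for one such handler, here's a sketch of a default-style chmod working on a simplified attributes structure. The structure, the function name, and the privileged-user test (user ID 0) are assumptions of the sketch, not the iofunc_*() code. It enforces two POSIX rules: only the owner or a privileged user may change the mode, and only the permission, set-ID, and sticky bits change, never the file-type bits.

```c
#define _XOPEN_SOURCE 700      /* for S_IFMT and friends on strict compilers */
#include <errno.h>
#include <sys/stat.h>
#include <sys/types.h>

/* Simplified per-device attributes (hypothetical). */
struct dev_attr {
    uid_t  uid;    /* owner */
    gid_t  gid;    /* group */
    mode_t mode;   /* file-type bits plus permission bits */
};

/* Default-style chmod: returns 0 on success or an errno value. */
static int default_chmod(struct dev_attr *attr, uid_t caller, mode_t new_mode)
{
    if (caller != 0 && caller != attr->uid)
        return EPERM;                        /* not the owner, not privileged */

    /* Keep the file type; replace only the low 12 mode bits. */
    attr->mode = (attr->mode & S_IFMT) | (new_mode & 07777);
    return 0;
}
```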

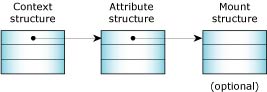

A resource manager is responsible for three main data structures: the context, the attributes structure, and, for filesystems, the mount structure.

The first data structure, the context, has already been discussed (see the section on "Message types"). It holds data used on a per-open basis, such as the current position within a file (the lseek() offset).

Since a resource manager may be responsible for more than one device (e.g. devc-ser* may be responsible for /dev/ser1, /dev/ser2, /dev/ser3, etc.), the attributes structure holds data on a per-device basis. The attributes structure contains such items as the user and group ID of the owner of the device, the last modification time, etc.

For filesystem (block I/O device) managers, one more structure is used. This is the mount structure, which contains data items that are global to the entire mount device.
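The relationships between the three structures can be sketched in C. The fields here are hypothetical and far smaller than the library's defaults; what matters is the direction of the pointers. Each context refers to the attributes of the device that was opened, and for a filesystem, each attributes structure can refer to the single mount structure they all share.

```c
#include <stddef.h>
#include <sys/types.h>
#include <time.h>

/* Global to the whole mounted filesystem (filesystem managers only). */
struct mount_info {
    unsigned block_size;      /* example of a mount-wide setting */
};

/* One per device (or file): ownership, times, and so on. */
struct attributes {
    uid_t  uid;
    gid_t  gid;
    time_t mtime;             /* last modification time */
    int    open_count;        /* how many contexts refer to this device */
    struct mount_info *mount; /* NULL for a plain device manager */
};

/* One per open(): the per-client state. */
struct context {
    size_t offset;            /* the lseek() offset */
    struct attributes *attr;  /* the device this open refers to */
};

/* Creates a context for a new open of the device described by attr. */
static struct context make_context(struct attributes *attr)
{
    struct context ctx = { 0, attr };

    attr->open_count++;
    return ctx;
}
```

Two opens of /dev/ser1 would share one attributes structure but have separate contexts, so each client keeps its own offset.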

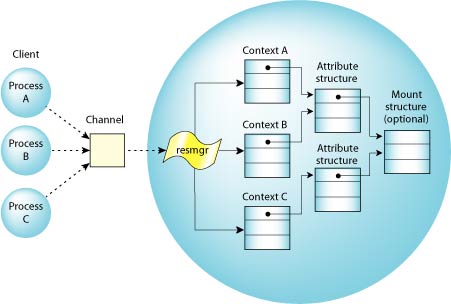

When a number of client programs have opened various devices on a particular resource, the data structures may look like this:

Multiple clients opening various devices.

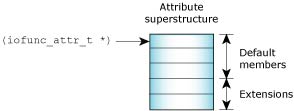

The iofunc_*() default functions operate on the assumption that the programmer has used the default definitions for the context block and the attributes structures. This is a safe assumption for two reasons:

By definition, the default structures must be the first members of their respective superstructures, allowing clean and simple access to the requisite base members by the iofunc_*() default functions:

Encapsulation.
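Here's a sketch of the encapsulation rule using hypothetical types: `struct base_attr` stands in for the library's default attributes structure, and `struct ser_attr` extends it with device-specific fields. Because the base structure is the first member, a pointer to the superstructure, suitably converted, points to the base (the C standard guarantees that a structure pointer converted to the type of its first member points to that member). A generic function written only for the base therefore works unchanged.

```c
#include <sys/types.h>

/* Stand-in for the library's default attributes structure. */
struct base_attr {
    uid_t uid;
    gid_t gid;
};

/* Device-specific superstructure: the base MUST come first. */
struct ser_attr {
    struct base_attr base;    /* what the generic handlers see */
    unsigned baud;            /* what only this driver uses */
    int      parity;
};

/* A generic, default-style handler that knows only the base structure. */
static void generic_chown(struct base_attr *attr, uid_t uid, gid_t gid)
{
    attr->uid = uid;
    attr->gid = gid;
}

/* The driver hands its superstructure to the generic code; the conversion
 * is valid because `base` is the first member. */
static void driver_chown(struct ser_attr *dev, uid_t uid, gid_t gid)
{
    generic_chown((struct base_attr *)dev, uid, gid);
}
```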

The library contains iofunc_*() default handlers for these client functions:

- chmod()
- chown()
- close()
- devctl()
- fpathconf()
- fseek()
- fstat()
- lock()
- lseek()
- mmap()
- open()
- pathconf()
- stat()
- utime()

By supporting pathname space mapping, having a well-defined interface to resource managers, and providing a set of libraries for common resource manager functions, QNX Neutrino offers the developer unprecedented flexibility and simplicity in developing "drivers" for new hardware, a critical feature for many embedded systems.
